TWO recent court cases in the US have marked significant moments in the battle to keep young people safe online.

First, Meta was fined hundreds of millions of dollars for misleading users over the safety of its platforms for children. Then Meta and Google were sued for damaging a woman’s mental health in childhood through addictive platform designs.

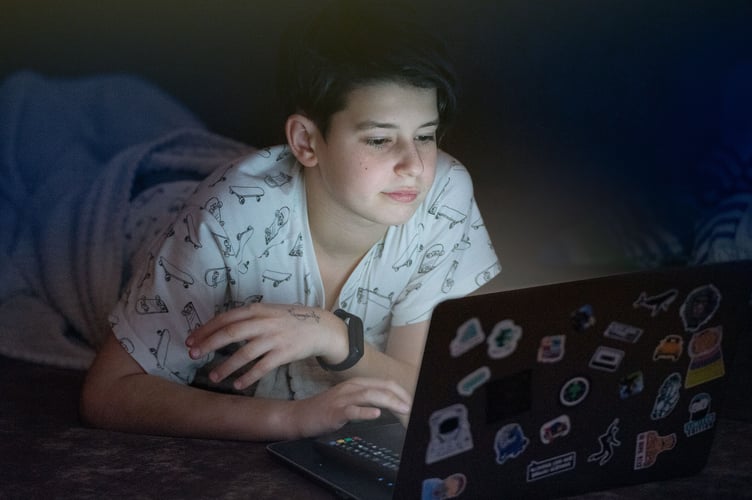

These cases prove that children and families are being failed by tech companies, who continue to expose young users to sexually explicit material, contact with dangerous adults and other preventable risks on their platforms.

Many people in the UK are calling for a complete ban on social media for under-16s. While that would be better than the status quo, the NSPCC believes the government should take a more ambitious approach, one that tackles the root causes of harm to children online head-on.

Ensuring tech companies build safety into every device, platform and AI tool from day one is the best way to prevent children seeing harmful or illegal content, and to ensure companies are only able to offer children tailored, age-appropriate services.

In addition, they must ban the use of addictive design tricks which keep young users gaming, scrolling and watching for hours on end.

This technology is already available to these companies and can easily be added to existing devices and platforms. Choosing inaction is not only a failure of responsibility, it is a choice to continue putting children at risk.

The government has opened a public consultation to help guide its next steps in keeping young people out of harm’s way online and demanding safer services for children.

We encourage everyone reading this to have their say and to urge the government to act decisively, ensuring the safety of children online by holding tech firms to account.

To take part in the consultation, go to www.gov.uk and search 'social media consultation'.

Rani Govender

NSPCC Associate Head of Child Safety Online
